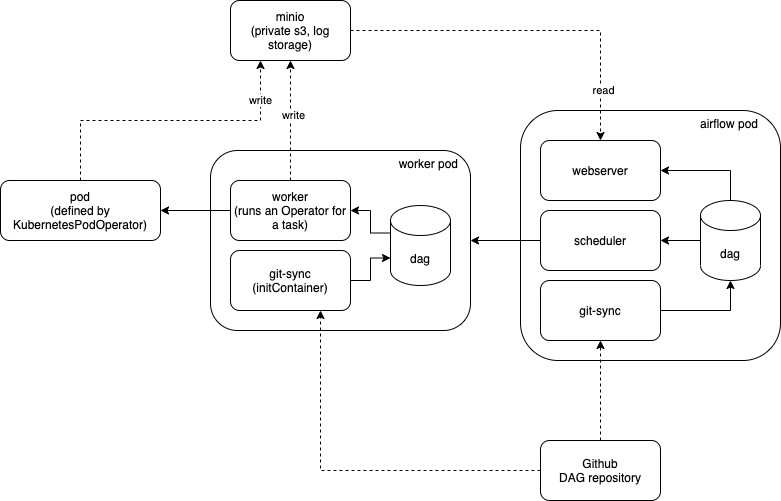

class .operators.kubernetes_pod.KubernetesPodOperator(

    *,
    namespace: Optional = None,
    image: Optional = None,
    name: Optional = None,
    cmds: Optional = None,
    arguments: Optional = None,
    ports: Optional = None,
    volume_mounts: Optional = None,
    volumes: Optional = None,
    env_vars: Optional = None,
    env_from: Optional = None,
    secrets: Optional = None,
    in_cluster: Optional = None,
    cluster_context: Optional = None,
    labels: Optional = None,
    reattach_on_restart: bool = True,
    startup_timeout_seconds: int = 120,
    get_logs: bool = True,
    image_pull_policy: Optional = None,
    annotations: Optional = None,
    resources: Optional = None,
    affinity: Optional = None,
    config_file: Optional = None,
    node_selectors: Optional = None,
    node_selector: Optional = None,
    image_pull_secrets: Optional = None,
    service_account_name: Optional = None,
    is_delete_operator_pod: bool = False,
    hostnetwork: bool = False,
    tolerations: Optional = None,
    security_context: Optional = None,
    dnspolicy: Optional = None,
    schedulername: Optional = None,
    full_pod_spec: Optional = None,
    init_containers: Optional = None,
    log_events_on_failure: bool = False,
    do_xcom_push: bool = False,
    pod_template_file: Optional = None,
    priority_class_name: Optional = None,
    pod_runtime_info_envs: List = None,
    termination_grace_period: Optional = None,
    configmaps: Optional = None,
    **kwargs

)

… and Airflow is not running in the same cluster, consider using …

- namespace (str) - the namespace to run within Kubernetes.
- image (str) - Docker image you wish to launch. Defaults to …, but fully qualified URLs will point to custom repositories.
- name (str) - name of the pod in which the task will run, will be used (plus a random …
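To make the signature above more concrete, here is a minimal sketch of how a handful of those parameters might be filled in. The helper names (`pod_operator_kwargs`, `make_pod_task`), the image name, and the namespace are hypothetical, and the import path inside `make_pod_task` is an assumption that depends on the installed Kubernetes provider version; it is deferred so the dictionary helper can be used without Airflow installed.

```python
from typing import Any, Dict, List, Optional


def pod_operator_kwargs(
    name: str,
    image: str,
    namespace: str = "default",
    cmds: Optional[List[str]] = None,
    arguments: Optional[List[str]] = None,
) -> Dict[str, Any]:
    """Collect keyword arguments whose names appear in the signature above."""
    return {
        "name": name,
        "image": image,
        "namespace": namespace,
        "cmds": cmds or [],
        "arguments": arguments or [],
        "get_logs": True,                # stream container logs into the task log
        "is_delete_operator_pod": True,  # remove the pod once the task finishes
        "startup_timeout_seconds": 120,  # same value as the documented default
    }


def make_pod_task(task_id: str, dag: Any, **kwargs: Any) -> Any:
    """Build the operator; the import is deferred until Airflow is needed."""
    # Assumption: this module path matches the installed provider version.
    from airflow.providers.cncf.kubernetes.operators.kubernetes_pod import (
        KubernetesPodOperator,
    )
    return KubernetesPodOperator(task_id=task_id, dag=dag, **kwargs)


# Hypothetical values, for illustration only.
EXAMPLE_KWARGS = pod_operator_kwargs(
    name="hello-pod",
    image="my-registry.example.com/tools:latest",
    namespace="spark-jobs",
    cmds=["echo"],
    arguments=["hello from the pod"],
)
```

Inside a DAG file, `make_pod_task("hello_pod", dag, **EXAMPLE_KWARGS)` would then create the task. Keeping the arguments in a plain dictionary makes them easy to inspect or reuse across tasks.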

I have a Spark job that runs via a Kubernetes pod. Until now I was using a YAML file to run my jobs manually. Now I want to schedule my Spark jobs via Airflow. This is the first time I am using Airflow, and I am unable to figure out how I can add my YAML file in Airflow.

From what I have read, I can schedule my jobs via a DAG in Airflow. A DAG example is this:

    from airflow import DAG
    from airflow.operators import PythonOperator

    args = {...}

    dag = DAG('test_dag', default_args=args, catchup=False)

    t1 = PythonOperator(task_id='multitask1', python_callable=print_text1, dag=dag)
    t2 = PythonOperator(task_id='multitask2', python_callable=print_text, dag=dag)

In this case the above methods will get executed one after the other once I play the DAG. Now, in case I want to run a spark-submit job, what should I do?

Airflow has a concept of operators, which represent Airflow tasks. In your example PythonOperator is used, which simply executes Python code and is most probably not the one you are interested in, unless you submit the Spark job from within Python code. There are several operators that you can make use of:

- BashOperator, which executes the given bash script for you. You may run kubectl or spark-submit using it directly.
- SparkSubmitOperator, the specific operator to call spark-submit.
- KubernetesPodOperator, which creates a Kubernetes pod for you. You can launch your driver pod directly using it.
- HttpOperator + Livy on Kubernetes: you spin up a Livy server on Kubernetes, which serves as a Spark job server and provides a REST API to be called by the Airflow HttpOperator.

Note: for each of the operators you need to ensure that your Airflow environment contains all the required dependencies for execution, as well as the credentials configured to access the required services.

Related question: Airflow SparkSubmitOperator - How to spark-submit in another server

As of 2023, there is a new option to run a Spark job on Kubernetes: "SparkKubernetesOperator". In Airflow we can use "SparkKubernetesOperator" and provide the Spark job details in a ".yaml" file.

Airflow task:

    spark_operator = SparkKubernetesOperator(
        application_file='spark_app_config.yaml',
        …
    )

The YAML file will create the driver and executor pods to run the Spark job.

Sample YAML file:

    # Copyright 2017 Google LLC
    # Licensed under the Apache License, Version 2.0
    # you may not use this file except in compliance with the License.
    # You may obtain a copy of the License at
    # Unless required by applicable law or agreed to in writing, software
    # distributed under the License is distributed on an "AS IS" BASIS,
    # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
    # See the License for the specific language governing permissions and
    apiVersion: "/v1beta2"
    …
    mainApplicationFile: "local:///opt/spark/examples/jars/spark-examples_2.12-3.4.0.jar"
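Of the options discussed here, the BashOperator route is the closest to running the job by hand, so a rough sketch of it may help. The helper `spark_submit_command`, the API server URL, the image name, and the `org.apache.spark.examples.SparkPi` main class are assumptions for illustration; only the jar path comes from the sample above. The `BashOperator` import is deferred so the command builder can be tried without Airflow installed.

```python
import shlex
from typing import Any, Dict, Optional


def spark_submit_command(
    master: str,
    app_file: str,
    main_class: str,
    image: str,
    name: str = "spark-job",
    extra_conf: Optional[Dict[str, str]] = None,
) -> str:
    """Build a shell-safe spark-submit command line for a Kubernetes master."""
    conf = {"spark.kubernetes.container.image": image}
    conf.update(extra_conf or {})
    parts = [
        "spark-submit",
        "--master", master,
        "--deploy-mode", "cluster",
        "--name", name,
        "--class", main_class,
    ]
    # Sort the settings so the generated command is deterministic.
    for key, value in sorted(conf.items()):
        parts += ["--conf", f"{key}={value}"]
    parts.append(app_file)
    # shlex.join quotes any argument that needs it (Python 3.8+).
    return shlex.join(parts)


def make_spark_submit_task(task_id: str, dag: Any, command: str) -> Any:
    """Wrap the command in a BashOperator; requires Airflow 2 at call time."""
    from airflow.operators.bash import BashOperator

    return BashOperator(task_id=task_id, bash_command=command, dag=dag)


# Hypothetical cluster address, image, and main class.
EXAMPLE_COMMAND = spark_submit_command(
    master="k8s://https://kubernetes.example.com:6443",
    app_file="local:///opt/spark/examples/jars/spark-examples_2.12-3.4.0.jar",
    main_class="org.apache.spark.examples.SparkPi",
    image="my-registry.example.com/spark:3.4.0",
    name="spark-pi",
)
```

Calling `make_spark_submit_task("spark_pi", dag, EXAMPLE_COMMAND)` in a DAG file would schedule the submission. This assumes the Airflow worker has `spark-submit` on its path and credentials for the cluster, as the note about dependencies points out.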
